HuggingFace TEI Produces Fundamentally Different Embeddings Than PyTorch - And You Might Not Know It

Published: April 9, 2026

Authors: Eric Donnell & Luna, IdeaForge Studios

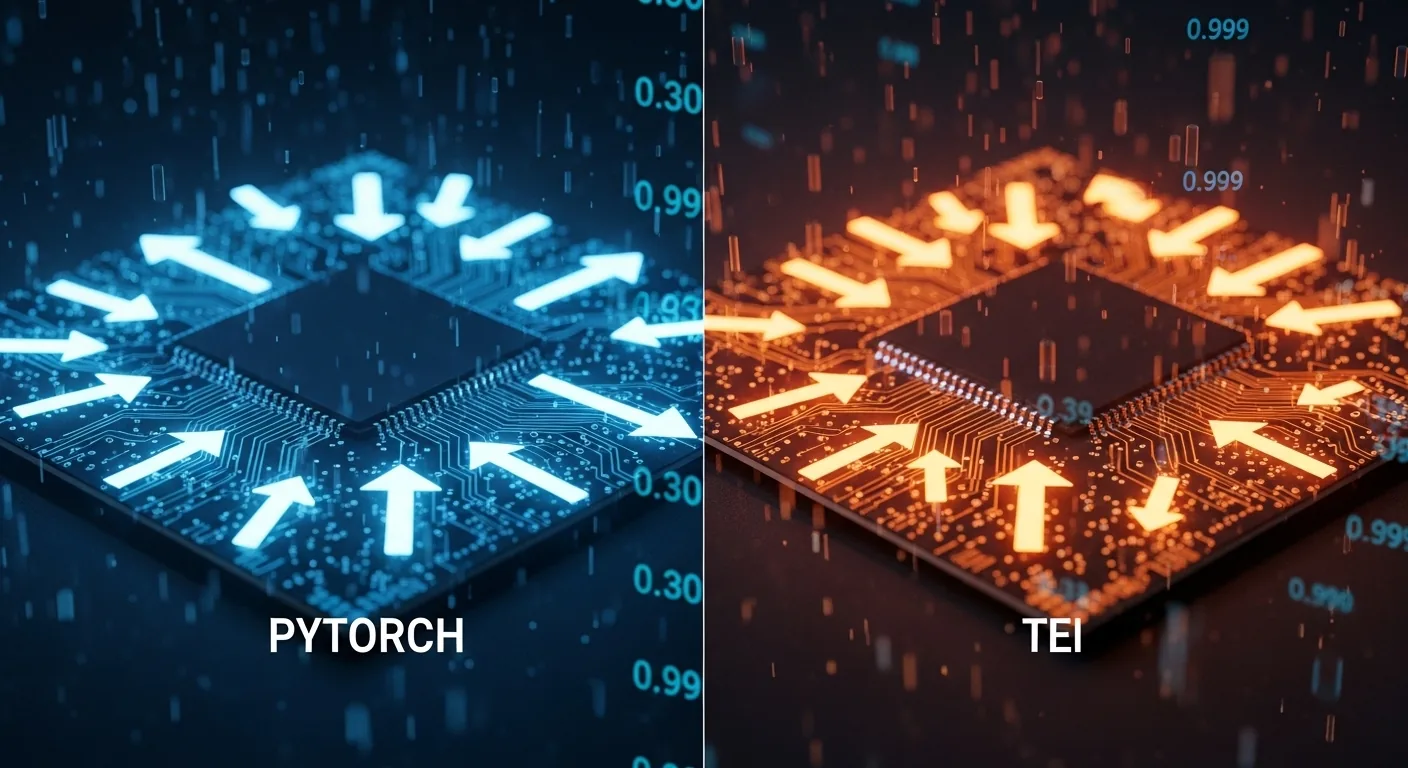

TL;DR: We discovered that HuggingFace Text Embeddings Inference (TEI) and standard PyTorch produce vectors with cosine similarity as low as 0.30 for the same model and input text. This isn't a bug — it's an architectural divergence that silently degrades search quality. If you're using TEI in production, you need to read this.

The Discovery

We were migrating our embedding infrastructure from an NVIDIA RTX 3090 running HuggingFace TEI to an Intel Arc Pro B70 running a custom PyTorch-based server. Same model: intfloat/e5-mistral-7b-instruct. Same weights. Same input text.

When we compared search results between the old and new embeddings, we expected minor numerical differences. Instead, we found zero overlap in search results for 6 out of 7 test queries. The two systems were finding completely different documents for identical queries.

We initially suspected the hardware — NVIDIA CUDA vs Intel XPU could produce different floating-point behavior. So we ran a controlled experiment.

The Controlled Experiment

We embedded 2,296 documents on three configurations: two running identical PyTorch code on different GPUs, and one running TEI:

| Configuration | GPU | Framework | Code |
|---|---|---|---|
| A | Intel Arc Pro B70 (XPU) | PyTorch model.to("xpu") | Identical |
| B | NVIDIA RTX 4090 (CUDA) | PyTorch model.to("cuda") | Identical |
| C | NVIDIA RTX 3090 (CUDA) | HuggingFace TEI v1.8 (Rust/Candle) | TEI default |

The code for configurations A and B was identical — standard HuggingFace transformers:

```python
from transformers import AutoModel, AutoTokenizer
import torch

device = "cuda"  # "xpu" for configuration A, "cuda" for configuration B

tokenizer = AutoTokenizer.from_pretrained("intfloat/e5-mistral-7b-instruct")
model = AutoModel.from_pretrained("intfloat/e5-mistral-7b-instruct", torch_dtype=torch.float16)
model = model.to(device)
model.eval()

# texts: the list of document strings to embed
encoded = tokenizer(texts, padding=True, truncation=True, max_length=4096, return_tensors="pt")
encoded = {k: v.to(device) for k, v in encoded.items()}

with torch.no_grad():
    output = model(**encoded)

# Last-token pooling: index -1 is the final real token only when the tokenizer pads on the left
embeddings = output.last_hidden_state[:, -1, :]
embeddings = torch.nn.functional.normalize(embeddings, p=2, dim=1)
```

Configuration C used TEI's default serving with the same model.
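For reference, a request against TEI's `/embed` route might look like the sketch below. The URL, helper names, and timeout are illustrative assumptions, not the client used in this experiment; the port matches the `-p 8080:80` mapping in the Reproduction section.

```python
import json
import urllib.request

# Assumption: a TEI container is listening locally on port 8080.
TEI_URL = "http://localhost:8080/embed"


def build_embed_request(texts, url=TEI_URL):
    """Build (but do not send) a POST request for TEI's /embed route."""
    payload = json.dumps({"inputs": list(texts)}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def embed_with_tei(texts, url=TEI_URL, timeout=60):
    """Send the texts to TEI and return one list of floats per input text."""
    request = build_embed_request(texts, url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `embed_with_tei(["some text"])` requires a running server. Splitting out `build_embed_request` makes the payload easy to inspect without one.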

The Results

We compared 50 randomly sampled vectors across all three configurations:

A vs B (Intel XPU vs NVIDIA CUDA — Same PyTorch Code)

| Metric | Value |
|---|---|
| Cosine Similarity | 0.999999 |
| L2 Distance | 0.001337 |
| Max Element Difference | 0.000549 |

The vectors are effectively identical, differing only in the last few decimal places. Different GPU silicon produces the same embeddings when running the same code. Hardware doesn't matter.

B vs C (NVIDIA PyTorch vs NVIDIA TEI — Same GPU Family)

| Metric | Value |
|---|---|
| Cosine Similarity | 0.303 |
| L2 Distance | ~1.15 |
| Max Element Difference | ~0.47 |

The vectors are only weakly aligned. In 4096-dimensional space, a cosine similarity of 0.30 corresponds to an angle of roughly 72 degrees, far closer to orthogonal (90 degrees) than to the near-zero angle between A and B. This is the same GPU vendor, running the same model weights, on the same input text.
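The three metrics in the tables above can be computed as in the following minimal sketch. It uses plain Python lists for portability, and the function names are illustrative rather than taken from the experiment script.

```python
import math


def compare_vectors(a, b):
    """Return (cosine similarity, L2 distance, max element difference)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cosine = dot / (norm_a * norm_b)
    l2 = math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))
    max_diff = max(abs(x - y) for x, y in zip(a, b))
    return cosine, l2, max_diff


def l2_from_cosine(cosine):
    """L2 distance between two unit-length vectors with the given cosine."""
    return math.sqrt(max(0.0, 2.0 - 2.0 * cosine))
```

Because the embeddings are L2-normalized, cosine similarity and L2 distance are tied together by `l2 = sqrt(2 - 2 * cosine)`. That relation gives about 0.0014 for a cosine of 0.999999 and about 1.18 for 0.303, in line with the reported 0.001337 and ~1.15 (an average of per-pair distances will not match the formula applied to an average cosine exactly).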

Impact on Search Quality

We embedded 122,149 documents with both PyTorch and TEI, stored them in separate Qdrant collections, and ran 10 search queries spanning different content types (emotional, technical, philosophical, factual).

| Metric | Result |
|---|---|
| Queries where B70/PyTorch won | 9 / 10 |
| Queries where 3090/TEI won | 0 / 10 |
| Average overlap in top-10 results | 0.1 / 10 |
| PyTorch average top-1 score | 0.83 |
| TEI average top-1 score | 0.76 |

The PyTorch embeddings consistently found more relevant, more specific documents. The TEI embeddings returned generic topic-level matches instead of semantically precise results.

The TEI embeddings weren't broken — they still returned results. But they returned significantly worse results. This is the insidious part: there's no error, no warning, no indication that your search quality is degraded. Everything looks like it's working. It just works poorly.
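One plausible way to compute the "average overlap in top-10 results" figure is sketched below, assuming each search returns a ranked list of document IDs. The function names are illustrative, and the exact scoring procedure behind the table may differ.

```python
def top_k_overlap(results_a, results_b, k=10):
    """Count document IDs shared by the top-k of two ranked result lists."""
    return len(set(results_a[:k]) & set(results_b[:k]))


def mean_top_k_overlap(runs_a, runs_b, k=10):
    """Average the per-query overlap across paired lists of ranked results."""
    if len(runs_a) != len(runs_b) or not runs_a:
        raise ValueError("need the same non-zero number of queries on both sides")
    overlaps = [top_k_overlap(a, b, k) for a, b in zip(runs_a, runs_b)]
    return sum(overlaps) / len(overlaps)
```

Under this definition, an average of 0.1 across 10 queries means the two collections shared a single document in the top 10 for one query and none for the other nine.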

Why This Happens

TEI uses the Candle backend — a Rust-based deep learning framework — not PyTorch. While Candle implements the same mathematical operations, the execution differs in several ways:

- Different GEMM tiling strategies: Matrix multiplications are decomposed into blocks differently, accumulating partial products in different orders

- Different attention kernels: TEI uses its own optimized attention implementation, not standard PyTorch attention

- FP16 non-associativity: In half-precision floating point, (a + b) + c ≠ a + (b + c). Through 32 transformer layers with ~100 matrix multiplications each, different accumulation orders compound into meaningful divergence

- L2 normalization: The final normalization projects all vectors onto the unit hypersphere. This rescaling changes each vector's length but not its direction, so the angular differences seen after normalization are already present in the raw hidden states

The result: small per-operation differences compound through the network into vectors that point in fundamentally different directions.
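The non-associativity point can be seen on a toy example. The sketch below emulates half-precision addition with the standard library's `struct` module (format code `e`); it illustrates the rounding behaviour only and does not reproduce any Candle or PyTorch kernel, nor the size of the divergence measured above.

```python
import struct


def to_fp16(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def fp16_add(x, y):
    """Add two numbers and round the result to half precision."""
    return to_fp16(to_fp16(x) + to_fp16(y))


# Near 4096, adjacent half-precision values are 4 apart.
a, b, c = 4096.0, 1.5, 1.5

left_first = fp16_add(fp16_add(a, b), c)   # (a + b) + c
right_first = fp16_add(a, fp16_add(b, c))  # a + (b + c)

print(left_first, right_first)  # 4096.0 4100.0
```

Adding the two small values one at a time loses both of them to rounding, while adding their sum first moves the result to the next representable value. A GEMM kernel that tiles its accumulation differently changes the order of exactly this kind of addition.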

Known Issues

This problem has been reported in scattered GitHub issues but without controlled experiments or comprehensive analysis:

- TEI #94: SentenceTransformer vs TEI mismatch for multilingual-e5-large

- TEI #642: Qwen3-Embedding vectors differ sharply from SentenceTransformers (cosine < 0.2)

- TEI #649: Inconsistent outputs for Qwen3 models

Our contribution is confirming this affects e5-mistral-7b-instruct (one of the most popular embedding models), providing controlled hardware experiments that isolate the cause to the serving framework, and measuring real-world search quality impact at scale.

Recommendations

If you're using TEI in production:

- Do not assume TEI embeddings match PyTorch embeddings. They don't. If you generated your vector database with one framework and switch to the other, your search quality will silently degrade.

- Never mix framework-generated vectors in the same collection. Vectors from TEI and vectors from PyTorch exist in different vector spaces. Mixing them corrupts nearest-neighbor relationships.

- If migrating between frameworks, re-embed everything. There is no conversion or calibration that fixes a 0.30 cosine similarity divergence. You must regenerate all vectors with the new framework.

- Test your specific model. The magnitude of divergence may vary by model architecture. We measured 0.30 for E5-Mistral-7B; reports suggest Qwen3 models can be even worse (< 0.2).

- Consider using PyTorch directly if embedding quality matters more than throughput. TEI is faster, but our results show PyTorch produces more discriminative embeddings that yield better search results for E5-Mistral-7B.

If you're choosing an embedding serving framework:

Pick one and stick with it. The framework you use to generate embeddings becomes part of your embedding pipeline identity. Changing it is a full re-indexing operation, not a configuration change.
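One way to make the "pipeline identity" idea concrete is to store a small descriptor alongside each collection and refuse writes from a different pipeline. The sketch below is hypothetical: `EmbeddingPipelineId`, its fields, and `assert_same_pipeline` are illustrative names, not part of TEI, PyTorch, or any vector database API.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EmbeddingPipelineId:
    """Hypothetical record of everything that shapes the vector space."""
    model: str
    framework: str
    framework_version: str
    dtype: str
    pooling: str


def assert_same_pipeline(collection_id, writer_id):
    """Raise if a writer's pipeline differs from the one that built the collection."""
    if collection_id == writer_id:
        return
    theirs = asdict(writer_id)
    changed = sorted(
        key for key, value in asdict(collection_id).items() if theirs[key] != value
    )
    raise ValueError(
        "embedding pipeline mismatch in: " + ", ".join(changed)
        + "; re-embed the collection instead of mixing vectors"
    )
```

Where the descriptor lives (collection metadata, a sidecar file, a config table) is a design choice. What matters is that a framework change fails loudly instead of silently writing incompatible vectors.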

Reproduction

Our experiment script is available at: [link to repo when published]

```shell
# Generate embeddings with PyTorch on any GPU
python silicon_experiment.py --device cuda --collection test_pytorch

# Generate embeddings with TEI (--gpus all exposes the host GPU to the container)
docker run --gpus all -p 8080:80 ghcr.io/huggingface/text-embeddings-inference:1.8 \
    --model-id intfloat/e5-mistral-7b-instruct --dtype float16

# Compare vectors in both collections
# Expected: cosine similarity ~0.30 between PyTorch and TEI vectors
```

Conclusion

The embedding serving framework is not a transparent optimization layer. It is a computational choice that fundamentally affects the geometry of your vector space. TEI and PyTorch produce vectors that are barely correlated (0.30 cosine similarity) despite using identical model weights and input text.

This matters for anyone building RAG systems, semantic search, vector databases, or any application that depends on embedding consistency. The framework you choose shapes what your system finds — and what it misses.

Eric Donnell is the founder of IdeaForge Studios, building AI agent infrastructure. This finding emerged during a hardware migration project and was isolated through controlled experiments across Intel XPU and NVIDIA CUDA platforms.